Getting (and keeping) your website pages indexed in Google can be a challenge for new websites and for websites with technical SEO or content quality issues. This article is designed to help you uncover potential reasons why Google may not be indexing your site. Sometimes the fix is quick; other times you must dig deeper to uncover the true cause of Google not indexing all of your web pages.

How to Check Google’s Index of Your Site

To first confirm whether your page (or entire site) is missing from Google's index, follow these steps:

- Use the “site:domain.com” query, for example: site:kernmedia.com. This will show you most (but likely not all) URLs that Google has indexed in its search engine for a domain. The query may not return every indexed page because it can be served from any of Google’s data centers, each of which has a somewhat different index, so you may see more or fewer URLs indexed for your site on any given day. Tip: Add “www” before your domain if your website defaults to the “www” subdomain; the results will then show only URLs indexed for that subdomain.

- Use the “site:domain inurl:<slug>” query such as this example: site:kernmedia.com inurl:google-not-indexing-site. This will show you whether Google has a specific page indexed.

- Use the “site:domain filetype:<filetype>” query such as this example: site:kernmedia.com filetype:xml. This will show you whether Google has a page with a specific file type indexed for your site.

- Check “Index Status” in Google Search Console. This handy report will allow you to see (at a glance) how many pages on your website are indexed in Google’s search engine. It can also show you how many URLs are blocked or have been removed. All three metrics are shown via a graph, over the course of a year, which can be helpful for monitoring your site’s indexation in Google over time.

- Check “Sitemaps” in Google Search Console. This is another helpful report, which will show you how many pages in your XML sitemap have been submitted to Google, and how many are indexed. Similar to the Index Status report, this tool will show you a timeline of sitemap URL indexation over a month’s time (instead of a year).

Find more suggestions on Google search operators here.
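If you run these checks often, a small helper can compose the operator queries for you. The Python sketch below is illustrative only: `site_query` and `search_url` are hypothetical helper names, and the code just builds a Google search URL to open in your browser; it does not fetch or parse any results.

```python
from typing import Optional
from urllib.parse import urlencode

GOOGLE_SEARCH = "https://www.google.com/search"


def site_query(domain: str, inurl: Optional[str] = None, filetype: Optional[str] = None) -> str:
    """Compose a site: query, optionally narrowed with inurl: or filetype:."""
    parts = [f"site:{domain}"]
    if inurl:
        parts.append(f"inurl:{inurl}")
    if filetype:
        parts.append(f"filetype:{filetype}")
    return " ".join(parts)


def search_url(query: str) -> str:
    """Percent-encode the query into a Google search URL to open in a browser."""
    return f"{GOOGLE_SEARCH}?{urlencode({'q': query})}"


# Example:
# print(search_url(site_query("kernmedia.com", inurl="google-not-indexing-site")))
# -> https://www.google.com/search?q=site%3Akernmedia.com+inurl%3Agoogle-not-indexing-site
```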

Common Reasons Why Google is Not Indexing Your Site

1. Response Codes Other than 200 (OK)

Perhaps it goes without saying, but if your pages don’t return a 200 (OK) server response code, don’t expect search engines to index them (or keep them indexed if they once were). Sometimes URLs are accidentally redirected or return 404 or 500 errors because of CMS issues, server issues, or user error. Do a quick check to make sure the URL for your page loads properly. If it loads and you can see the content, you’re probably fine, but you can always run URLs through HTTPStatus.io to verify. Here’s what that looks like:
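If you have more than a handful of URLs to verify, you can script the same check instead of pasting them into HTTPStatus.io one at a time. This is a minimal Python sketch using only the standard library; `fetch_status` and `classify_status` are hypothetical names, and the code deliberately does not follow redirects, so a 301 or 302 is reported as such rather than as the status of the page it points to.

```python
import urllib.error
import urllib.request


class _NoRedirect(urllib.request.HTTPRedirectHandler):
    """Refuse to follow redirects so a 301/302 is reported instead of its target."""

    def redirect_request(self, req, fp, code, msg, headers, newurl):
        return None


def fetch_status(url: str, timeout: float = 10.0) -> int:
    """Return the status code the server sends for `url`, without following redirects."""
    opener = urllib.request.build_opener(_NoRedirect)
    try:
        with opener.open(url, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        # 3xx (because redirects are not followed), 4xx and 5xx all land here.
        return err.code


def classify_status(code: int) -> str:
    """Bucket a status code the way this checklist cares about it."""
    if code == 200:
        return "ok"
    if 300 <= code < 400:
        return "redirect"
    if 400 <= code < 500:
        return "client error"
    if code >= 500:
        return "server error"
    return "other"


# Example (needs network access):
# for url in ["https://www.domain.com/", "https://www.domain.com/old-page/"]:
#     code = fetch_status(url)
#     print(url, code, classify_status(code))
```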

2. Blocked via Robots.txt

Your website’s /robots.txt file (located at http://www.domain.com/robots.txt, for example) gives Google its crawl commands. If a particular page on your site is missing from Google’s index, this is one of the first places to check. Google may show a message reading “A description for this result is not available because of this site’s robots.txt” under a URL if it previously indexed a page on your site that is now blocked via robots.txt. Here is what that looks like:

Check out my article on How to Write a Robots.txt File for more information on optimizing this important element of your SEO efforts.

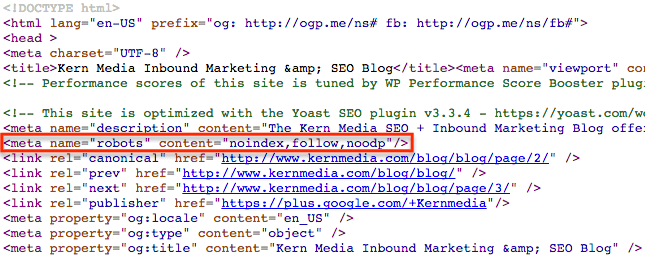
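You can also test specific URLs against a robots.txt file with Python's built-in `urllib.robotparser`. The sketch below (the helper name `allowed_by_robots` is illustrative) parses robots.txt text and asks whether Googlebot may fetch a given URL. One caveat: Python's parser applies the first rule that matches, in file order, while Google uses the most specific (longest) matching rule, so for files with overlapping Allow and Disallow lines, treat the result as a hint rather than a verdict.

```python
from urllib.robotparser import RobotFileParser


def allowed_by_robots(robots_txt: str, url: str, agent: str = "Googlebot") -> bool:
    """Parse robots.txt text and report whether `agent` may crawl `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


# Example against a live file (needs network access):
# live = RobotFileParser("https://www.domain.com/robots.txt")
# live.read()
# print(live.can_fetch("Googlebot", "https://www.domain.com/some-page/"))
```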

3. “Noindex” Meta Robots Tag

Another common reason why a page on your site may not be indexed in Google is that it has some form of “noindex” meta robots tag in the <head> of the page. When Google sees this tag, it’s a clear directive not to index the page. Google will always respect this directive, and it can come in a number of forms depending on how it’s coded:

- noindex,follow

- noindex,nofollow

- noindex,follow,noodp

- noindex,nofollow,noodp

- noindex

Here is a screenshot of what it looks like in the <head> of a page:

To check whether your page has a “noindex” meta robots tag, view the page source and look for the tag in the <head>. If your website is rendered with JavaScript, you may need to use the “Inspect Element” feature of Google Chrome to view the rendered <head>. More info here.
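To check many pages at once, a short script can look for the tag in each page's HTML. This Python sketch uses the standard-library `html.parser`; the class and function names are hypothetical. It also treats `<meta name="googlebot">` and the `none` value (shorthand for noindex, nofollow) as noindex, since Google documents both. Like viewing the source, it only sees the raw HTML you give it, so a tag injected by JavaScript will be missed.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # HTMLParser lowercases tag and attribute names, but not attribute values.
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").strip().lower() in ("robots", "googlebot"):
            for directive in attr.get("content", "").split(","):
                self.directives.append(directive.strip().lower())


def has_meta_noindex(html: str) -> bool:
    """True if the raw HTML carries noindex (or "none", which implies noindex)."""
    parser = RobotsMetaParser()
    parser.feed(html)
    parser.close()
    return "noindex" in parser.directives or "none" in parser.directives
```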

4. “Noindex” X-Robots Tag

Similar to a meta robots tag, an X-Robots-Tag lets you control indexation in Google at the page level. However, this tag is sent in the HTTP response header of a particular page or document rather than in its HTML. It is commonly used on non-HTML documents that have no <head>, such as PDF files, DOC files, and other files that webmasters wish to keep out of Google’s index. It’s unlikely that a “noindex” X-Robots-Tag has been applied accidentally, but you can check using the SEO Site Tools extension for Chrome. Here is a screenshot of what it looks like.
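If you'd rather not rely on a browser extension, the header can be read directly. Below is a minimal Python sketch, assuming the server answers HEAD requests (switch to a normal GET if it doesn't); the function names and the example PDF URL are hypothetical.

```python
import re
import urllib.request

# Matches the noindex directive, or "none" (shorthand for noindex, nofollow).
_NOINDEX = re.compile(r"\b(noindex|none)\b", re.IGNORECASE)


def header_has_noindex(values) -> bool:
    """True if any X-Robots-Tag value contains noindex/none.

    Simplification: a crawler-specific value such as "bingbot: noindex" is
    flagged too, even though it doesn't apply to Googlebot.
    """
    return any(_NOINDEX.search(value) for value in values)


def fetch_x_robots(url: str, timeout: float = 10.0) -> list:
    """Return every X-Robots-Tag header value sent for `url` (HEAD request)."""
    request = urllib.request.Request(url, method="HEAD")
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return resp.headers.get_all("X-Robots-Tag") or []


# Example (needs network access):
# print(header_has_noindex(fetch_x_robots("https://www.domain.com/files/brochure.pdf")))
```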

5. Internal Duplicates

Internal content duplication is a risk to any SEO effort. Internal duplicate content may or may not keep your pages out of Google’s index, but a high proportion of internally duplicated content will likely keep them from ranking well. If a particular page shares a large amount of content with another page on your website, that could be why it is either not indexed in Google or simply not ranking well.

To check for internal duplicate content, I recommend using the Siteliner tool to crawl your website. It will report all pages with internal duplicated content, highlighting content that is duplicated for easy reference, and also offer you a simple graphical view of how much content is duplicated on your website.
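For a rough, do-it-yourself approximation of this kind of report, you can compare pages using word "shingles" (overlapping runs of k words) and Jaccard similarity, a common near-duplicate detection technique. This is not how Siteliner works internally; it's a Python sketch that assumes you've already extracted each page's main body text, and the 5-word shingle size and 0.5 threshold are arbitrary starting points to tune.

```python
import re
from itertools import combinations


def shingles(text: str, k: int = 5) -> set:
    """Set of overlapping k-word sequences from lowercased text."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < k:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Share of shingles two pages have in common (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def near_duplicates(pages: dict, threshold: float = 0.5, k: int = 5) -> list:
    """Return (url_a, url_b, score) for page pairs at or above `threshold`."""
    sigs = {url: shingles(text, k) for url, text in pages.items()}
    pairs = []
    for (url_a, sig_a), (url_b, sig_b) in combinations(sigs.items(), 2):
        score = jaccard(sig_a, sig_b)
        if score >= threshold:
            pairs.append((url_a, url_b, round(score, 2)))
    return sorted(pairs, key=lambda pair: pair[2], reverse=True)
```

Comparing every pair is fine for a few hundred pages; larger sites would need a smarter indexing approach or a dedicated tool.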

Google clearly states here that websites should minimize similar content. Pages on your site with very similar content may still rank to some degree, but pages with exactly the same content will likely be filtered out of Google’s immediate search results. They may be omitted from search results under a notice such as the following.

6. External Duplicates

External duplicate content is what you might expect…content duplicated with other websites. Large ratios of duplicate content are a sure sign of low quality to Google, and should be avoided at all costs. No matter whether your website is a lead generation marketing site, E-Commerce store, online publishing platform, or hobbyist blog…the same rules apply.

One way to tell whether your content is duplicated on other sites is to put a snippet of it in quotes and search Google, as in this example, which shows that a Home Depot product description is duplicated on a number of other websites. Note: Due to Home Depot’s brand authority, review content and other factors, it is likely to still rank well in Google’s search results despite the duplicate content. However, less authoritative sites with duplicate content, such as manufacturer-supplied product descriptions, may not be fully indexed or rank well.

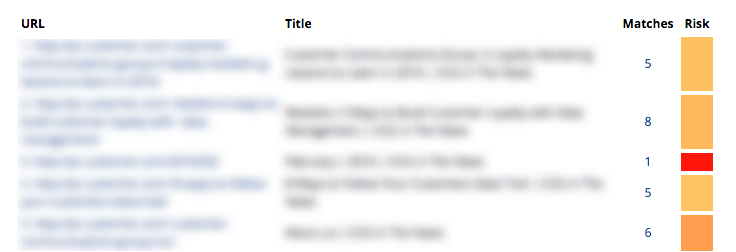
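When you want to spot-check several pages this way, you can pull a few evenly spaced phrases from your copy and search each one in quotes. The Python sketch below is a hypothetical helper, not a replacement for Copyscape: the phrase length and count are arbitrary defaults, and it only builds the search URLs for you to open manually.

```python
from urllib.parse import urlencode


def sample_phrases(text: str, length: int = 10, count: int = 3) -> list:
    """Pick up to `count` quoted, evenly spaced `length`-word phrases from `text`."""
    if count < 1:
        return []
    # Drop inner double quotes so the exact-match wrapper stays valid.
    words = text.replace('"', "").split()
    if len(words) <= length:
        return ['"' + " ".join(words) + '"'] if words else []
    step = max((len(words) - length) // max(count - 1, 1), 1)
    phrases = []
    for start in range(0, len(words) - length + 1, step):
        phrases.append('"' + " ".join(words[start:start + length]) + '"')
        if len(phrases) == count:
            break
    return phrases


def search_url(query: str) -> str:
    """Build a Google search URL for an exact-phrase query."""
    return "https://www.google.com/search?" + urlencode({"q": query})


# Example:
# for phrase in sample_phrases(open("product-description.txt").read()):
#     print(search_url(phrase))
```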

To check for external duplicate content, I recommend using Copyscape to either crawl your sitemap or a specific set of URLs. This tool will provide a very helpful (and exportable) report on your site’s duplication with external sites. Here’s a screenshot of what it looks like (with my client’s URL and Title information blurred out for privacy).

7. Overall Lack of Value to Google’s Index

It’s also possible that a particular page, or your website as a whole, could suck so badly that it simply doesn’t provide enough value to Google’s index. For example, affiliate sites that have nothing but dynamically-generated ads offer little value to the user. Google has refined its algorithm to avoid ranking (and sometimes avoid indexing) sites like these. If you’re concerned about your site quality, take a close look at the unique value it provides to Google’s index that isn’t already provided by other websites.

8. Your Website is Still New & Unproven

New websites don’t magically get indexed quickly by Google and other search engines. It takes links and other signals for Google to index a website and rank it visibly in its search results. This is why link building is so important, especially for new websites.

9. Page Load Time

If your site has pages that load slowly and they aren’t fixed, Google will likely lower their rankings over time, and those pages could even drop out of its index. Typically, a slow page will simply drop in the rankings, but that’s nearly as bad as not being indexed at all.

To check page load time, you can use Google’s PageSpeed Insights tool or the GTmetrix tool. Here’s a screenshot showing an example of a report that Google’s tool provides.
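Those tools render the full page in a browser. As a rough complement, you can time how long your server takes to deliver a page's raw HTML, which helps spot slow server responses across many URLs. This Python sketch measures only that download from your own machine (no images, CSS or JavaScript), so its numbers won't match PageSpeed Insights or GTmetrix; the function names and URL are hypothetical.

```python
import statistics
import time
import urllib.request


def time_fetch(url: str, timeout: float = 30.0) -> float:
    """Seconds to request `url` and download its raw HTML (no images, CSS or JS)."""
    start = time.perf_counter()
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        resp.read()
    return time.perf_counter() - start


def summarize(samples: list) -> dict:
    """Median and worst-case fetch time, rounded to milliseconds."""
    return {
        "median_s": round(statistics.median(samples), 3),
        "max_s": round(max(samples), 3),
    }


# Example (needs network access):
# print(summarize([time_fetch("https://www.domain.com/slow-page/") for _ in range(5)]))
```

Taking the median of several runs smooths out one-off network hiccups, while the maximum shows how bad an unlucky visit can get.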

10. Orphaned Pages

Google crawls your website (and XML sitemap) to find links to your content, update its index, and (amongst other factors) influence your site’s rankings in its search results. If Google cannot find links to your content, either on your site or on an external site, then as far as Google is concerned it doesn’t exist, and it won’t be indexed. Pages without internal links are referred to as “orphaned pages,” and they can be a reason for reduced indexation in Google. To determine whether your pages are discoverable, crawl your site with a tool such as Screaming Frog and then search for the specific URLs in question. Here’s an example of what that looks like.

A more robust way to check for orphaned pages is to export the URLs from the Screaming Frog crawl and build a spreadsheet that matches them against your XML sitemap (assuming it’s accurate). This lets you immediately identify every URL that is included in your XML sitemap but was not discovered during the crawl. Keep in mind that your crawl settings determine which URLs are crawled, so experience with this tool is recommended.
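The sitemap-versus-crawl comparison can also be scripted instead of done in a spreadsheet. Below is a minimal Python sketch, assuming a standard `<urlset>` sitemap (not a sitemap index) and a CSV crawl export whose URL column is named `Address`; check the header row of your own export and adjust. The file names in the example are hypothetical.

```python
import csv
import xml.etree.ElementTree as ET

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_data) -> set:
    """All <loc> values from a standard <urlset> XML sitemap (str or bytes)."""
    root = ET.fromstring(xml_data)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text}


def crawled_urls(csv_path: str, column: str = "Address") -> set:
    """URLs from a crawl export; the "Address" column name is an assumption."""
    with open(csv_path, newline="", encoding="utf-8-sig") as handle:
        return {row[column].strip() for row in csv.DictReader(handle) if row.get(column)}


def orphan_candidates(in_sitemap: set, crawled: set) -> list:
    """Sitemap URLs the crawler never reached: candidates for orphaned pages."""
    return sorted(in_sitemap - crawled)


# Example:
# with open("sitemap.xml", "rb") as f:
#     sitemap = sitemap_urls(f.read())
# print(orphan_candidates(sitemap, crawled_urls("crawl-export.csv")))
```

URLs must match exactly, so normalize differences such as trailing slashes, `http` vs `https`, or `www` vs non-`www` before comparing; otherwise every mismatch will look like an orphan.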

When in Doubt, Ask for Help

For some people, this stuff is simply too technical and it’s best to seek consultation from an SEO specialist…like me 🙂 If you’re stuck, you need to determine how valuable your time is. Spending late nights attempting to address Google indexation and ranking will get tiresome. Remember that indexation does not equate to optimal ranking. Once Google has indexed your site, your content quality, link profile and other site and brand signals will determine how well your website ranks. But, indexation is the first step in your SEO journey.